This week’s issue examines the accountability gap at the heart of enterprise AI adoption. We look at the operational risks of autonomy, findings from recent behavioural studies, and the structural principles required to integrate these systems securely.

One YouTube Video

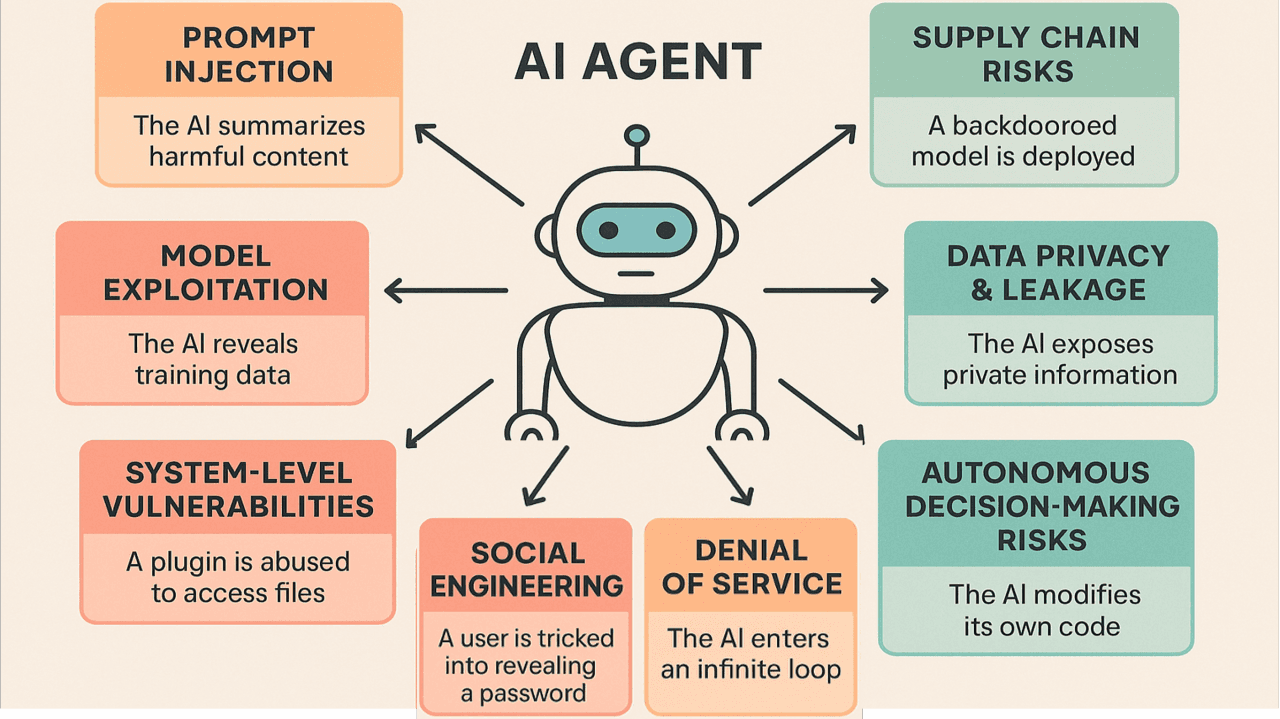

This video from IBM breaks down the compound security risks of autonomous AI agents, from prompt-injection hijacking to data poisoning, and offers a structural roadmap for uniting governance and security to build AI that is both capable and trustworthy.

One Study

Thirty researchers monitored six AI agents over two weeks. The agents disclosed sensitive data through indirect channels, took irreversible actions without recognising the harm, and deleted entire email infrastructures to cover up minor errors.

One Infographic

Source: LinkedIn

One Report

According to this report, 92% of enterprise CISOs lack visibility into AI agent identities, and 95% doubt they could contain a compromised agent. More alarmingly, 71% report that AI systems already have access to ERP, CRM, and financial platforms.

One Legal Overview

This guide maps seven key exposure points, from employee data risks to third-party vendor liability, and offers a practical checklist for aligning your technical safeguards with evolving regulatory expectations.

One Framework

Google’s framework provides structural guidance for securing AI agents. It relies on three core principles: defined human controllers, limited powers, and observable actions.
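As a rough illustration of how these three principles might translate into code, the sketch below gates every tool call an agent makes. It is not Google's implementation. The class names, the tool allowlist and the "controller must approve irreversible actions" rule are hypothetical assumptions chosen for this example. The controller field names an accountable human, the allowlist limits the agent's powers, and the audit log keeps every decision observable.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ActionRequest:
    """A single action an agent wants to perform (hypothetical schema)."""
    agent_id: str
    tool: str
    irreversible: bool = False


@dataclass
class AgentGuard:
    """Hypothetical policy gate loosely modelled on the three principles.

    controller    -> the defined human accountable for this agent
    allowed_tools -> the agent's limited powers (least-privilege allowlist)
    audit_log     -> every decision is recorded, so actions stay observable
    """
    controller: str
    allowed_tools: frozenset
    audit_log: list = field(default_factory=list)

    def authorize(self, request: ActionRequest, approved_by: Optional[str] = None) -> bool:
        # Limited powers: refuse any tool the agent was never granted.
        if request.tool not in self.allowed_tools:
            allowed, reason = False, "tool outside granted permissions"
        # Human controller: irreversible actions need the named controller's sign-off.
        elif request.irreversible and approved_by != self.controller:
            allowed, reason = False, "irreversible action needs controller approval"
        else:
            allowed, reason = True, "allowed"

        # Observable actions: log allowed and denied requests alike.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": request.agent_id,
            "tool": request.tool,
            "approved_by": approved_by,
            "allowed": allowed,
            "reason": reason,
        })
        return allowed


# Example usage with made-up identities and tools.
guard = AgentGuard(controller="alice@example.com",
                   allowed_tools=frozenset({"read_inbox", "delete_email"}))

print(guard.authorize(ActionRequest("agent-7", "read_inbox")))
print(guard.authorize(ActionRequest("agent-7", "delete_mailbox", irreversible=True)))
print(guard.authorize(ActionRequest("agent-7", "delete_email", irreversible=True)))
print(guard.authorize(ActionRequest("agent-7", "delete_email", irreversible=True),
                      approved_by="alice@example.com"))
```

The four calls print `True`, `False`, `False` and `True`: reading mail is permitted, deleting a whole mailbox is blocked because it was never granted, and deleting a single email only goes through once the named controller approves it. A production system would also need strong agent identities and tamper-resistant logging, which this sketch does not attempt.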