Advancing Privacy Through Testing: Insights from the First PET Arena CTF

Co-hosted by Oblivious and TikTok, PET Arena brought together nearly 100 researchers and over 5,900 submissions to test privacy systems.


Privacy-enhancing technologies are often evaluated in controlled environments, but how they behave in real-world use is a different question.

To explore this gap, Oblivious, in collaboration with TikTok and Florian Tramèr (Professor of Computer Science at ETH Zurich), recently concluded the inaugural PET Arena, a Capture The Flag (CTF) competition designed to test privacy-preserving systems in practice. Participants took on the role of the red team and were asked to extract target information from datasets under realistic constraints.

The competition received more than 5,900 queries from nearly 100 participants across Europe, spanning researchers, engineers, and privacy practitioners.

This format provided a structured, rigorous environment in which red-teaming methods could be tested against realistic constraints and a range of protection mechanisms. By having participants attack these systems directly, Oblivious and TikTok aimed to accelerate the development of practical, trustworthy privacy technologies.

The competition environment was built on synthetic datasets inspired by publicly available employment and income data. Across five progressively complex missions, participants designed strategies to extract target information, revealing how different privacy mechanisms perform under varying levels of protection.

Competition Design

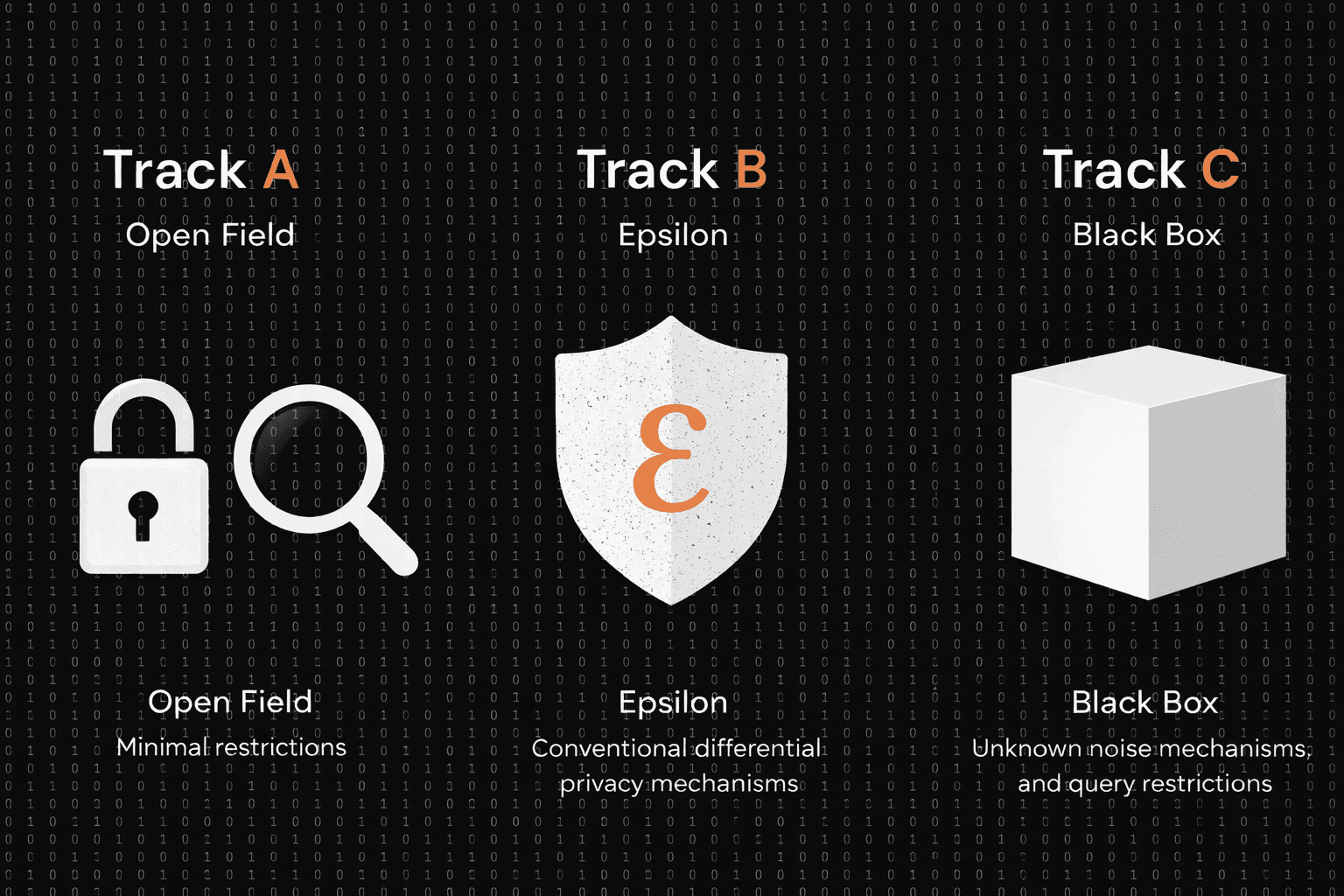

The competition evaluated practical privacy systems by challenging participants to extract target information through membership inference, statistical inference, attribute inference, and cross-linking of datasets, across three distinct tracks:

Track A (minimal protection)

Track B (conventional differential privacy with a known privacy budget)

Track C (a black-box setting with unknown mechanisms)
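To make Track B's "known privacy budget" concrete, here is a minimal sketch of a counting interface that enforces a fixed total epsilon. It assumes the Laplace mechanism and simple sequential composition (each query's epsilon is subtracted from the total); the post does not say which mechanisms or accounting PET Arena actually used, and the class name `BudgetedCounter` and the record fields are hypothetical.

```python
import random


class BudgetedCounter:
    """Hypothetical DP counting interface with a fixed total epsilon (assumption)."""

    def __init__(self, rows, total_epsilon, seed=None):
        self.rows = list(rows)
        self.remaining = total_epsilon
        self.rng = random.Random(seed)

    def _laplace(self, scale):
        # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
        return self.rng.expovariate(1 / scale) - self.rng.expovariate(1 / scale)

    def count(self, predicate, epsilon):
        if epsilon <= 0:
            raise ValueError("epsilon must be positive")
        # Sequential composition: every query spends its epsilon from the total budget.
        if epsilon > self.remaining:
            raise RuntimeError("privacy budget exhausted")
        self.remaining -= epsilon
        true_count = sum(1 for row in self.rows if predicate(row))
        # A counting query has sensitivity 1, so the Laplace scale is 1 / epsilon.
        return true_count + self._laplace(1 / epsilon)


if __name__ == "__main__":
    rows = [{"income": 52000}, {"income": 31000}, {"income": 78000}]
    db = BudgetedCounter(rows, total_epsilon=1.0, seed=0)
    print(db.count(lambda r: r["income"] > 50000, epsilon=0.5))  # true count 2, plus noise
    print(db.count(lambda r: r["income"] > 50000, epsilon=0.5))  # budget now fully spent
```

A third query at `epsilon=0.5` would raise `RuntimeError`. Real systems typically use tighter composition theorems than plain subtraction, but the principle is the same: once the published budget is spent, no further answers are released.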

Participants tackled five core missions across these tracks. Comparing results between tracks showed how each protection mechanism changes both the difficulty of an attack and the red-teaming strategy it calls for, offering practical insight into where existing approaches remain robust and where more attention is needed.

In the lead-up to the competition, we hosted in-person workshops in London and Dublin, where participants explored the platform, tested early strategies, and connected with others working on practical privacy challenges.

— PET Arena Demo Meetup, TikTok HQ, London

— PET Arena Demo Night, Dublin

Submissions demonstrated a wide range of approaches, including statistical exploitation, logical differencing, guardrail/noise bypass, and noise characterisation. Common pitfalls across multiple submissions included over-relying on noisy boundaries and exceeding the established privacy limits.
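For readers unfamiliar with logical differencing, here is a minimal sketch of the classic differencing attack, assuming an interface that answers arbitrary counting queries. The attacker asks for a count over everyone and a count over everyone except the target; the difference is the target's attribute. Without noise this is exact. With fresh Laplace noise on each answer but no enforced budget, averaging many repeats recovers the answer anyway, which is why exceeding privacy limits matters. The records, field names, and functions below are hypothetical and are not taken from PET Arena.

```python
import random
import statistics

# Hypothetical synthetic records; id 3 is the attacker's target.
ROWS = [
    {"id": 1, "high_income": False},
    {"id": 2, "high_income": True},
    {"id": 3, "high_income": True},
    {"id": 4, "high_income": False},
]


def noisy_count(rows, predicate, scale, rng):
    """Count matching rows plus Laplace(0, scale) noise; scale=0 means no noise."""
    true_count = sum(1 for r in rows if predicate(r))
    if scale == 0:
        return float(true_count)
    # Difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    return true_count + rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def differencing_attack(rows, target_id, scale, repeats, rng):
    """Estimate the target's high_income bit from two overlapping counts."""
    diffs = []
    for _ in range(repeats):
        everyone = noisy_count(rows, lambda r: r["high_income"], scale, rng)
        all_but_target = noisy_count(
            rows, lambda r: r["high_income"] and r["id"] != target_id, scale, rng
        )
        diffs.append(everyone - all_but_target)
    # Averaging independent noisy differences shrinks the noise's standard
    # deviation by a factor of sqrt(repeats).
    return statistics.mean(diffs)


rng = random.Random(42)
print(differencing_attack(ROWS, 3, scale=0, repeats=1, rng=rng))  # 1.0 (no noise)
print(differencing_attack(ROWS, 3, scale=2.0, repeats=5000, rng=rng))  # close to 1
```

With `scale=2.0`, a single noisy difference has a standard deviation of 4, so one pair of queries reveals little. After 5,000 repeats the standard deviation of the average falls to about 0.06. A budget that caps total epsilon stops this by refusing the extra queries.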

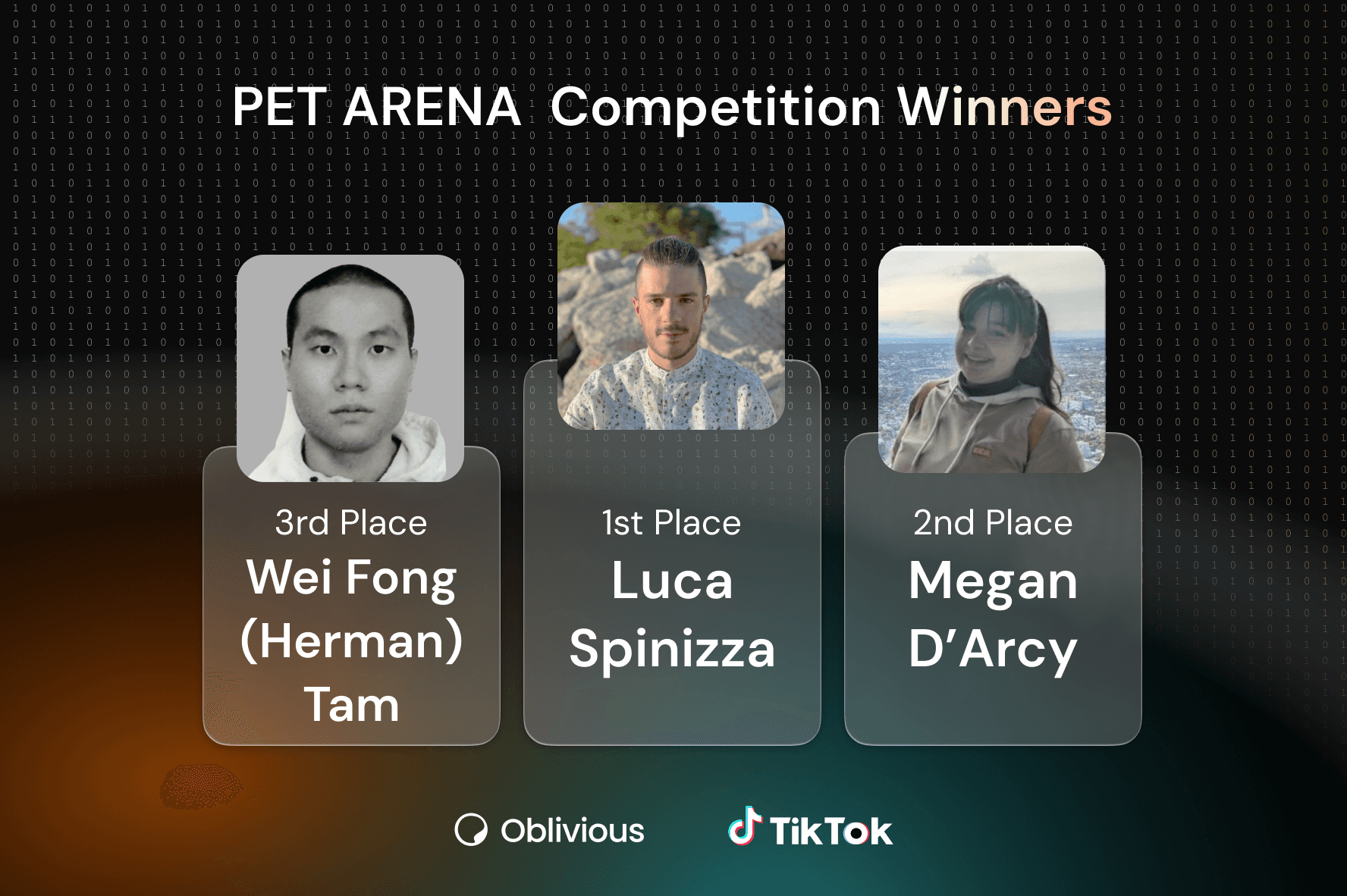

Following the final evaluation by the judging panel, the top performers are:

Luca Spinazza (first place), who achieved the highest overall score of 777 points, leading in both Track B (conventional differential privacy) and Track C (black-box setting).

Megan D'Arcy (second place), with a score of 724.1 points, demonstrating strong performance across the protected tracks.

Wai Fong (Herman) Tam (third place), who secured a score of 666.5 points with consistent results.

All winners will also receive a hybrid 1:1 personalised mentorship session with experts from Oblivious or TikTok.

PET Arena was co-hosted and organised by Oblivious (creators of the Antigranular platform) in partnership with the TikTok Privacy Innovation team and Dr. Florian Tramèr (Professor of Computer Science at ETH Zurich).

Submissions were evaluated on technical soundness, real-world relevance, efficiency, and robustness by a panel of distinguished cybersecurity and privacy experts: Sagar Sharma – Research Scientist, TikTok Privacy Innovation; Rundong Zhou – Privacy Research Engineer, TikTok Privacy Innovation; Jack Fitzsimons – CTO, Oblivious; Bozhidar Stevanoski – Researcher, Imperial College London; Prof. Keke Chen – Professor and Researcher, UMBC; Christine Task – Privacy Researcher, Knexus Research; and James Honaker – Privacy Expert, Augusta Griffin.

Academic Recognition

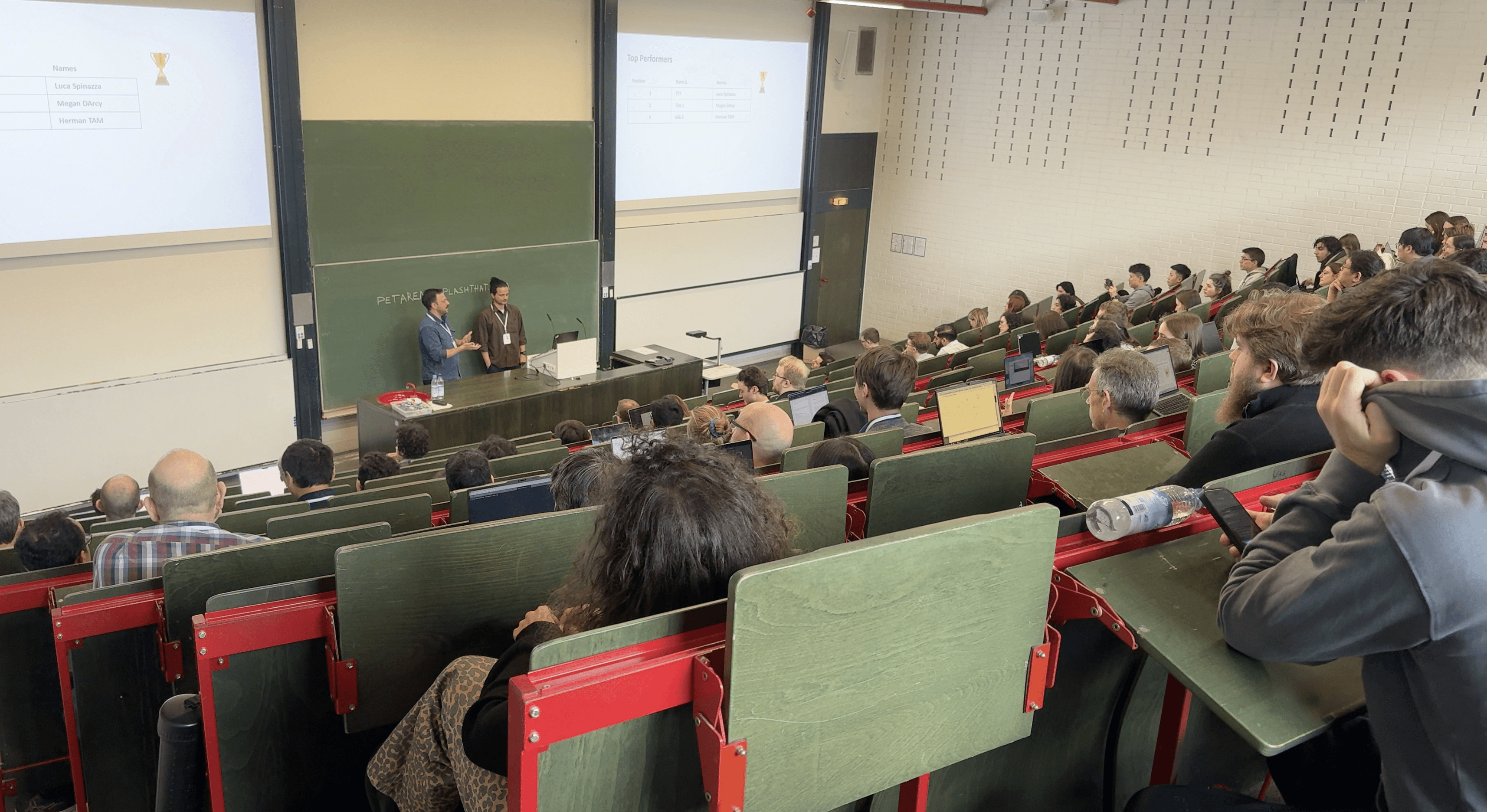

PET Arena was selected for the Competition Track and presented at the 2026 IEEE Symposium on Secure and Trustworthy Machine Learning (SaTML), a premier academic venue dedicated to advancing secure, private, and fair machine learning algorithms and systems.

— TikTok Privacy Innovation team presenting PET Arena results at IEEE SaTML 2026 in Munich

Winners Celebration

The competition concluded with the PET Arena Awards & Privacy Meetup, an informal social and networking event celebrating the participants and winners. The event brought together researchers and engineers working in differential privacy and machine learning to connect and share insights on strengthening privacy technologies.

Looking Ahead

One of the clearest takeaways from PET Arena is that evaluating privacy systems requires environments that reflect how they are actually used, including adversarial behaviour, iterative querying, and real constraints.

Future iterations will explore more complex scenarios and richer system interactions to better understand where current approaches hold and where more attention is needed.

If you’re interested in exploring similar challenges, join Antigranular, our free platform where you can experiment with privacy-preserving systems and take part in upcoming hackathons and competitions. And if you want to be notified about the second edition of PET Arena, sign up for this mailing list.

About the Winners:

Luca Spinazza is an Italian software engineer based in Dublin. He has over seven years of experience in full-stack software design and development, consulting, and strategic decision-making. Passionate about machine learning and AI since "before it was cool," he has a particular soft spot for privacy-enhancing technologies.

Megan Darcy currently works at Kainos, where she combines her background in data engineering with ongoing studies in Applied Cyber Security. This blend allows her to bring a unique perspective to data privacy and responsible AI. Recognised for her contributions to the tech community, she was awarded the Rising Star Award by Women Who Code in 2024.

Wai Fong (Herman) Tam is a Computer Science PhD candidate at Queen Mary University of London. Previously a Cyber Security Analyst focused primarily on blue-team work, he has always maintained a strong interest in red teaming, and he described PET Arena as a "fantastic learning opportunity." He now builds distributed architectures for the KUber collaborative machine learning project.

About the Judges:

Bozhidar Stevanoski is a Research Assistant at the AI Security and Privacy Lab at Imperial College London, studying the intersection of machine learning and privacy. His research focuses on machine learning-based attacks against query-based systems. Holding an MSc in Data Science and a BSc in Computer Science, he completed his thesis at the Jožef Stefan Institute. He is also a Google HashCode finalist and an International Mathematical Olympiad medalist.

Dr. Keke Chen is an associate professor in the Computer Science and Electrical Engineering Department at the University of Maryland, Baltimore County (UMBC). His work centres on confidential computing alongside privacy and security issues concerning AI models. He directs the Trustworthy and Intelligent Computing Lab, which operates as part of the Cybersecurity Institute at UMBC.

James Honaker is an expert in practical systems building for differential privacy. At Anonym (acquired by Mozilla), he built private lift and attribution systems. Previously at Meta, he was the scientific lead for DP specifications in AEMv2. A co-founder and Chief Privacy Engineer of the OpenDP Project, James has led privacy-preserving systems in production for TikTok, Meta, and Microsoft. He has architected pioneering libraries such as OpenDP, authored statistical software packages, and lectures in Applied Data Privacy at Harvard University.

Jack Fitzsimons leads technology development at Oblivious, a Dublin-based startup focused on privacy-enhancing technologies. Holding a D.Phil from the University of Oxford, he has tackled diverse data-centric industry challenges, from computer vision at NASA's Jet Propulsion Laboratory to quantitative data analysis at ElectroRoute. He has also been an active member of the UN's Privacy-Preserving Technologies Task Team since 2020 and the UN PET Lab since its inception.

Christine Task is the Director of Privacy Solutions and Synthetic Data at Knexus Research. She holds a PhD from Purdue University, where her research focused on privacy-preserving social network analysis. Her expertise centres on differential privacy and robust evaluation of synthetic data generators. An active leader in the privacy-enhancing technology community, she frequently collaborates with organisations like NIST and OpenDP to advance standards for safe data sharing.
