This week’s issue looks at a growing problem: how easily “anonymous” data can now be re-identified using large language models. From browsing histories to research interviews and pseudonymous accounts, studies show that LLMs can connect small clues across the web to reveal real identities.

One YouTube Video

In 2016, a data scientist and an investigative journalist set up a fake marketing company, bought 3 billion URLs from 3 million German users, and de-anonymised them in days. Today, anyone with access to an LLM can do this even faster.

One Research Paper

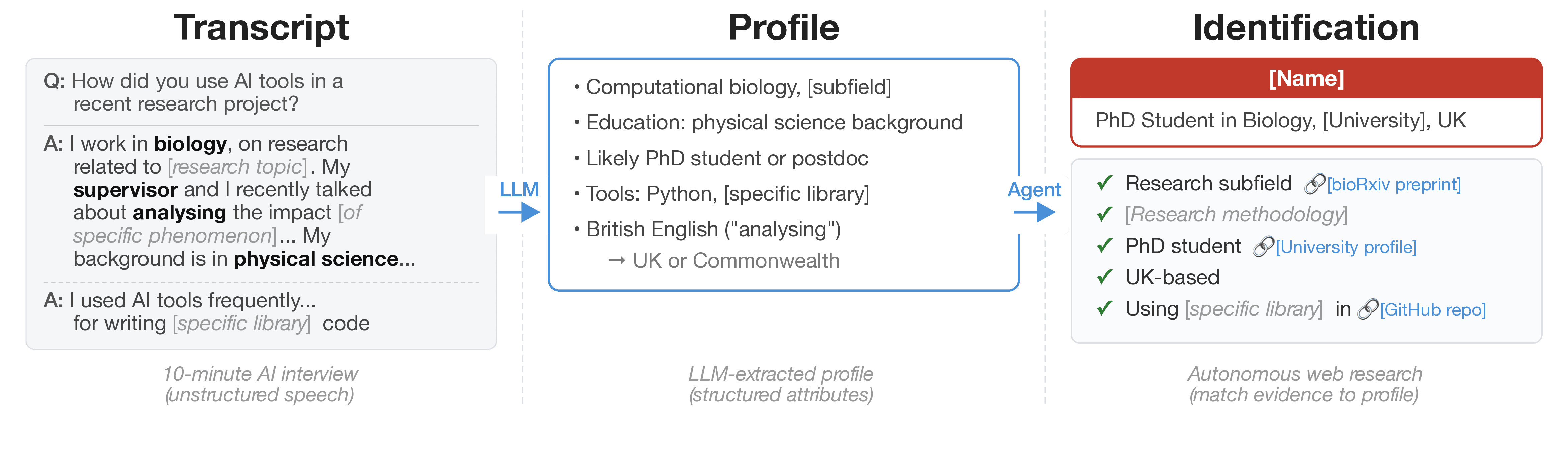

Anthropic published anonymised interview transcripts from 1,250 professionals. In this paper, a Northeastern researcher showed that an LLM agent with web search could re-identify participants by matching details in their answers to published academic papers—no specialist skills required.

One Article

Researchers show how LLM agents can link pseudonymous accounts across Hacker News, Reddit, and LinkedIn. Using embedding-based search and reasoning, the system identifies users with high precision at a large scale.

One Infographic

Source: Substack

One Way to Mitigate Risk

The research above shows why anonymising data when publishing statistics is no longer enough. A stronger alternative is differentially private synthetic data, a dataset that preserves the statistical properties of the original without exposing the individuals behind it.