This week, we bring together research, guidance, and practical analysis on emerging AI security challenges, including shadow AI, autonomous behaviour, and the limits of policy-based controls.

One YouTube Video

As AI shifts from chatting to acting, the risks increase. Bri Kopecki of IBM examines how shadow AI and agentic AI intersect with Zero Trust, AI security and governance, and safe automation, and outlines controls that keep autonomous systems reliable.

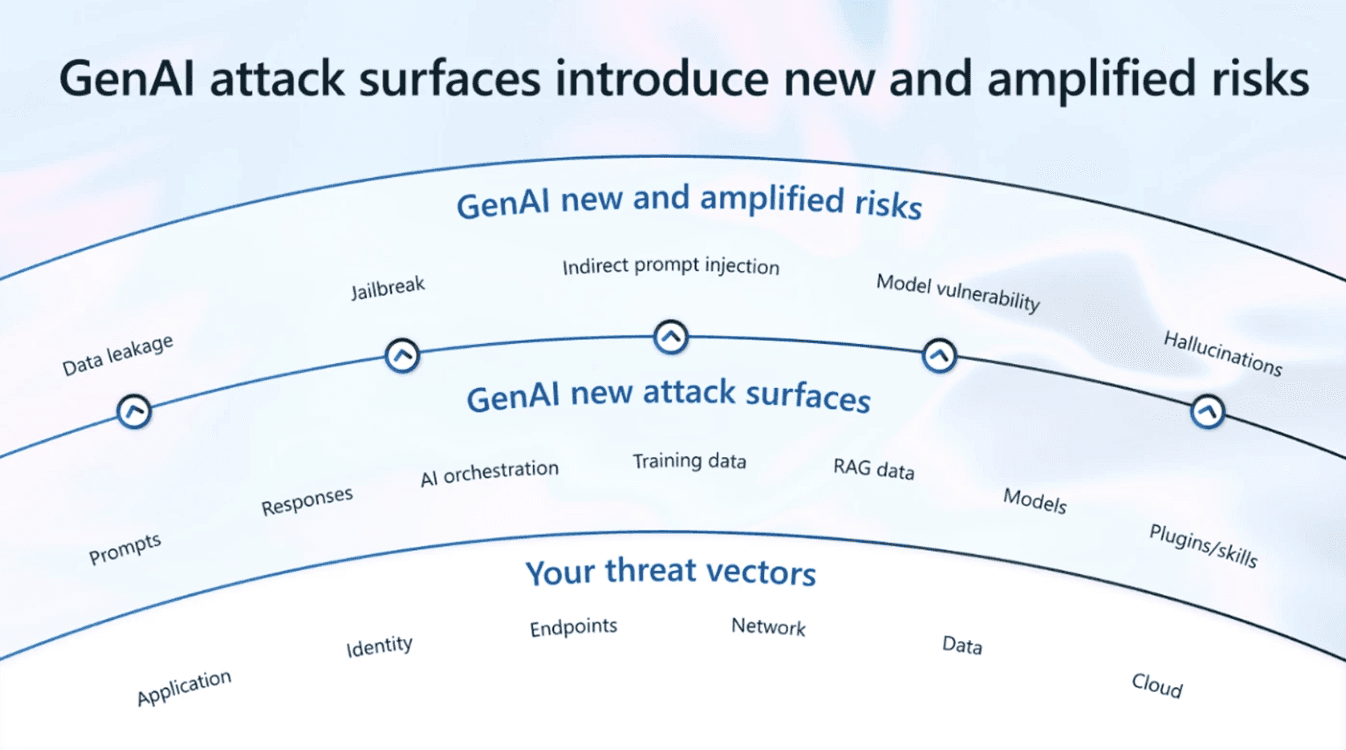

One Infographic

Source: Microsoft Security

One Framework

NIST’s AI Risk Management Framework (AI RMF) provides a high-level yet practical framework for managing AI risks across the system lifecycle. It helps organisations address security, privacy, and accountability concerns while aligning AI development with their existing risk and governance practices.

One Report

This report examines the rise of shadow AI as a systemic security blind spot, demonstrating how unsanctioned AI tools are now a significant source of data exposure.

One Article

This article shows how shared models, weak runtime isolation, and policy-based trust assumptions create structural exposure, and why confidential computing is emerging as a necessary control.